The Executive’s AI Due Diligence: 7 Critical Non-Negotiables

Your CEO told you to “do AI.” Now what?

Across industries, leadership teams are under pressure to deliver meaningful AI outcomes—fast. But most AI initiatives don’t fail because the models aren’t capable. They fail because companies don’t do their AI due diligence: they adopt tools without the foundations necessary for scale, governance, or measurable ROI.

If you’ve been handed an AI mandate, this guide gives you seven non-negotiables every leadership team must demand from any AI solution. These principles cut through vendor hype and provide a due-diligence framework that holds pilots, platforms, and partners accountable to best-practice standards… not wishful thinking.

Use this checklist before you make commitments that lock you into expensive, inflexible, or ungovernable AI deployments.

The Seven Non-Negotiables of AI Due Diligence:

1. LLM & Tool Agnosticism

Don’t buy a model—buy an architecture.

AI changes monthly. Your strategy should never depend on a single provider’s roadmap, pricing decisions, or outages.

An effective AI stack must be able to:

- Run multiple models from multiple vendors

- Switch or route tasks across providers based on cost and capability

- Incorporate new models without rework or massive re-training
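
As a rough illustration of the routing requirement above, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `ModelOption` and `route` names, the vendor names, the per-token prices, and the stub callables that stand in for real provider SDK calls. It shows one possible policy only: pick the cheapest registered model that supports the task.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, List


@dataclass(frozen=True)
class ModelOption:
    """One model behind a common interface (hypothetical names and prices)."""
    name: str
    cost_per_1k_tokens: float        # illustrative USD figure, not a real price
    capabilities: FrozenSet[str]     # task types this model is trusted with
    call: Callable[[str], str]       # provider client wrapped as prompt -> text


def route(capability: str, options: List[ModelOption]) -> ModelOption:
    """Return the cheapest registered model that supports the capability."""
    eligible = [m for m in options if capability in m.capabilities]
    if not eligible:
        raise LookupError(f"no registered model supports {capability!r}")
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)


# Stub callables stand in for real vendor SDK calls.
registry = [
    ModelOption("vendor-a-large", 0.030, frozenset({"summarize", "reasoning"}),
                lambda prompt: f"[vendor-a-large] {prompt}"),
    ModelOption("vendor-b-small", 0.002, frozenset({"summarize"}),
                lambda prompt: f"[vendor-b-small] {prompt}"),
]

chosen = route("summarize", registry)
print(chosen.name, "->", chosen.call("Summarize the Q3 pipeline notes"))
```

In this sketch, adding a provider means appending one entry to `registry`; nothing that calls `route` has to change, which is the property worth asking vendors to demonstrate.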

Why it matters: In the first four months of 2025 alone, DeepSeek-R1 disrupted pricing expectations (January), Anthropic shipped Claude 3.7 Sonnet (February), and OpenAI released GPT-4.1 (April). Organizations locked into a single vendor absorbed cost increases, capability gaps, or both.

Red flag: Platforms with rigid architectures that can’t adapt to new models without significant rework or vendor involvement.

Ask vendors:

“Show me this same task running across two different LLMs. What’s the cost and performance difference, based on tasks my team actually performs (not industry benchmarks)? How long does it take to add a new provider?”

This principle is so critical that we dedicated an entire analysis to it. Model lock-in isn’t just a technical risk; it’s a strategic liability that compounds over time. For a deeper dive into how vendor dependence limits innovation and creates hidden costs, read our full article: Why Companies Need LLM-Agnostic AI.

2. Persistent Knowledge (Not Disposable Chats)

If your prompts and conversations disappear, so does your ROI.

AI conversations typically live inside siloed chat windows and vanish when a project ends or a user leaves. The result:

- Recreated prompts

- Lost decisions and context

- Repeated work across teams

- No institutional memory

Leaders should demand the ability to save, search, tag, organize, and reuse prompts, notes, threads, and outputs—just like any other strategic knowledge asset.

Why it matters: A sales team that rebuilds the same qualification prompt 47 times isn’t using AI; they’re fighting it. Organizations that treat AI outputs as permanent records unlock compounding value.

What good looks like: Searchable libraries, version history, tagging by project or department, and the ability to clone proven workflows.
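
To make "searchable libraries, version history, tagging, and cloning" concrete, here is a small in-memory Python sketch. The `PromptLibrary` and `PromptRecord` names, their methods, and the sample prompts are all hypothetical; a real platform would persist this data and add access control.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass
class PromptRecord:
    name: str
    tags: Set[str] = field(default_factory=set)
    versions: List[str] = field(default_factory=list)  # oldest first

    @property
    def latest(self) -> str:
        return self.versions[-1]


class PromptLibrary:
    """Hypothetical in-memory store; illustrates the idea, not a product API."""

    def __init__(self) -> None:
        self._records: Dict[str, PromptRecord] = {}

    def save(self, name: str, text: str, tags: Set[str]) -> int:
        """Append a new version and return its 1-based version number."""
        record = self._records.setdefault(name, PromptRecord(name))
        record.tags |= tags
        record.versions.append(text)
        return len(record.versions)

    def search(self, keyword: str = "", tag: Optional[str] = None) -> List[str]:
        """Names whose latest version contains keyword and, if given, carry tag."""
        return sorted(
            r.name for r in self._records.values()
            if keyword.lower() in r.latest.lower() and (tag is None or tag in r.tags)
        )

    def clone(self, name: str, new_name: str) -> None:
        """Start a new record from the latest version of a proven prompt."""
        source = self._records[name]
        self.save(new_name, source.latest, set(source.tags))


library = PromptLibrary()
library.save("lead-qualification", "Score this lead on budget and timing.", {"sales"})
library.save("lead-qualification", "Score this lead on budget, authority, and timing.", {"sales"})
library.clone("lead-qualification", "partner-qualification")
print(library.search(tag="sales"))
```

Earlier versions stay in `versions` rather than being overwritten, so a team can see how a prompt evolved and roll back if a change makes results worse.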

3. Context Control & Recovery

AI without control is AI without reliability.

Every regular AI user has seen it: a conversation is going well… until one bad prompt or response derails everything. Most tools offer no way to recover the productive state; you just start over.

Enterprises need:

- Direct editing of what the AI “knows” at any point

- The ability to remove confusing or off-topic context

- Versioning or forking when conversations drift

- Ways to compare two different reasoning paths side-by-side
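
The capabilities listed above (removing context, forking, and comparing paths) can be sketched with a very small data structure. This Python example is illustrative only: the `Thread` class and its methods are hypothetical names, and a real tool would also track model responses, metadata, and authorship.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Thread:
    """A conversation as an ordered list of (role, text) messages."""
    messages: List[Tuple[str, str]] = field(default_factory=list)

    def add(self, role: str, text: str) -> None:
        self.messages.append((role, text))

    def fork(self, upto: int) -> "Thread":
        """Copy the first `upto` messages into an independent thread."""
        return Thread(list(self.messages[:upto]))

    def remove(self, index: int) -> None:
        """Drop a confusing or off-topic message from the context."""
        del self.messages[index]

    def diverges_at(self, other: "Thread") -> int:
        """Index of the first message where the two threads differ."""
        for i, (mine, theirs) in enumerate(zip(self.messages, other.messages)):
            if mine != theirs:
                return i
        return min(len(self.messages), len(other.messages))


main = Thread()
main.add("user", "Analyze churn by region.")
main.add("assistant", "(regional analysis so far)")
main.add("user", "An off-topic request that derails the thread.")

# Recover the productive state: branch from just before the derailment.
branch = main.fork(2)
branch.add("user", "Break the analysis down by product line.")
print(main.diverges_at(branch))
```

Because `fork` copies the message list, the original thread is left untouched, so both paths remain available for side-by-side comparison.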

Why it matters: When a $200K analysis depends on a 40-message thread, you can’t afford to lose control at message 38. Context control turns exploratory work into auditable, repeatable processes.

Red flag: “Just start a new chat” is not a good business strategy.

Ask vendors:

“Can we edit context mid-conversation? Can we fork a thread from any previous point and explore two paths? Show me how that works.”

4. Integration with the Data You Already Own

Stop copy-pasting. Start generating value.

Most organizations already have the data needed for powerful AI outcomes: CRMs, project documents, SOPs, knowledge bases, spreadsheets, and databases. The problem? Many AI tools treat this data as if it doesn’t exist.

AI solutions should integrate directly so employees can:

- Ask natural-language questions against internal data

- Retrieve and summarize documents without exporting

- Connect AI outputs into existing workflows (Slack, Salesforce, email)

- Validate AI-generated answers against real information

Why it matters: The fastest path to ROI isn’t building new data pipelines. It’s unlocking the value of what you already have.

A customer success team that can query three years of support tickets in plain English finds insights in minutes, not months. A finance team that can ask “Which vendors exceeded budget last quarter?” without SQL gets answers in seconds, not spreadsheets.

What good looks like: Native connectors to Google Drive, SharePoint, Notion, Salesforce, databases, and REST APIs… without custom engineering.

(For more on this principle, see our article While Others Waste Billions… Use Existing Data You Already Own.)

5. Cost Visibility & Guardrails

Predictable spend → predictable scaling.

As organizations adopt multiple models across multiple teams, costs can spike without warning. A single developer testing GPT-5.1 on a large dataset can burn through $10K in an afternoon.

Leaders need:

- Real-time usage visibility across all providers

- Budgets and quotas by team, user, or project

- Alerting before overages, not after

- Cost-per-task analytics to identify which models deliver the best value
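
One way to picture budgets, quotas, and alerting before an overage is the following Python sketch. The `BudgetGuard` class, the team and model names, and the dollar amounts are all hypothetical, and the 0.8 alert ratio is an illustrative default rather than a recommendation.

```python
from collections import defaultdict
from typing import Dict


class BudgetGuard:
    """Hypothetical per-team spend tracker with a pre-overage alert."""

    def __init__(self, monthly_budgets: Dict[str, float], alert_ratio: float = 0.8):
        self.budgets = monthly_budgets        # team -> monthly budget in USD
        self.alert_ratio = alert_ratio        # fraction of budget that triggers an alert
        self.spend = defaultdict(float)       # team -> USD spent this month
        self.by_model = defaultdict(float)    # (team, model) -> USD spent

    def record(self, team: str, model: str, cost_usd: float) -> str:
        """Record one call's cost and return 'ok', 'alert', or 'blocked'."""
        budget = self.budgets[team]
        projected = self.spend[team] + cost_usd
        if projected > budget:
            return "blocked"                  # refuse the call; nothing is recorded
        self.spend[team] = projected
        self.by_model[(team, model)] += cost_usd
        return "alert" if projected >= self.alert_ratio * budget else "ok"


guard = BudgetGuard({"sales": 1000.0})
print(guard.record("sales", "vendor-a-large", 700.0))
print(guard.record("sales", "vendor-b-small", 100.0))
print(guard.record("sales", "vendor-a-large", 300.0))
print(dict(guard.by_model))
```

The check happens before the spend is recorded, so the guard can stop a call that would exceed the budget instead of reporting it afterwards. The `by_model` breakdown is the raw material for comparing what each model costs a given team.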

Why it matters: Without this, CFOs lose patience, budgets get frozen, and pilots never graduate to enterprise programs. With it, you can confidently scale knowing exactly what growth costs.

Red flag: Vendors who only show aggregate monthly billing with no breakdowns by user, project, or model.

Ask vendors:

“Show us cost by project, user, and provider. Show us an example alert when a team approaches 80% of their monthly budget.”

Cost control at scale requires more than dashboards; it requires strategy. For tactical approaches to reducing AI spend while maintaining quality, see our guide: Thriving Amid The AI Cost Crunch: Strategies for Success.

6. Human-in-the-Loop Quality (Anti-Workslop)

AI is powerful. Without human discernment, it’s dangerous.

We’ve written before about “AI workslop”: the flood of mediocre AI content that creates rework, confusion, and downstream errors. Microsoft’s May 2025 layoffs hit software engineers hardest (they made up over 40% of the cuts in the company’s home state), coming just after CEO Satya Nadella disclosed that AI now writes up to 30% of the company’s code. But someone still needs to review it.

To avoid AI workslop, organizations need:

- Built-in human review steps

- Clear evaluation rubrics (accuracy, tone, completeness)

- Approval gates before outputs leave a team

- Training materials that define “good” vs. “acceptable” vs. “redo”

Why it matters: You cannot scale AI if quality depends on individual vigilance. You can scale AI when quality is built into the process.

What good looks like: Configurable approval chains, feedback loops that improve prompts over time, and audit trails showing who reviewed what.
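
A minimal sketch of an approval gate with an audit trail might look like the Python below. It reuses the rubric criteria named earlier (accuracy, tone, completeness). Everything else is an assumption for illustration: the `Draft` and `review` names, the 1–5 rating scale, the `pass_mark` threshold, and the rule that only a "redo" verdict blocks release.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

RUBRIC = ("accuracy", "tone", "completeness")


@dataclass
class Draft:
    text: str
    audit_trail: List[Tuple[str, str]] = field(default_factory=list)  # (reviewer, verdict)
    released: bool = False


def review(draft: Draft, reviewer: str, ratings: Dict[str, int], pass_mark: int = 4) -> str:
    """Rate a draft 1-5 on each rubric criterion; return 'good', 'acceptable', or 'redo'."""
    missing = [c for c in RUBRIC if c not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    lowest = min(ratings[c] for c in RUBRIC)
    if lowest >= pass_mark:
        verdict = "good"
    elif lowest >= pass_mark - 1:
        verdict = "acceptable"
    else:
        verdict = "redo"
    draft.audit_trail.append((reviewer, verdict))   # who reviewed what
    draft.released = verdict != "redo"              # the gate: 'redo' never leaves the team
    return verdict


draft = Draft("AI-generated summary of the customer escalation")
print(review(draft, "reviewer-1", {"accuracy": 2, "tone": 5, "completeness": 4}))
print(review(draft, "reviewer-2", {"accuracy": 4, "tone": 5, "completeness": 4}))
print(draft.released, draft.audit_trail)
```

The weakest criterion decides the verdict, so a polished tone cannot compensate for an accuracy problem. That choice is one assumption among several possible ones, and it is the kind of rule a team would want to configure.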

7. Security, Governance & Portability

Your data. Your governance. Your exit plan.

A mature program must do its AI due diligence to protect the business from vendor lock-in, data exposure, and compliance risk, especially under regulations like the EU AI Act (in force since August 2024, with obligations phasing in over the following years) and emerging regional AI laws, such as those in US states.

Executives should require:

- Role-based access controls and full audit logs

- Clear data retention and deletion policies

- Exportability of all prompts, threads, and outputs (no hostage data)

- Support for private/VPC deployments

- Model allow-lists and usage policies at the org level

Why it matters: Anything less exposes the organization to regulatory risk today and makes it far harder to migrate tomorrow.

Red flag: Vendors who claim “we don’t train on your data” but won’t put it in an SLA, or who make data export a manual support ticket.

Your Executive AI Due-Diligence Scorecard

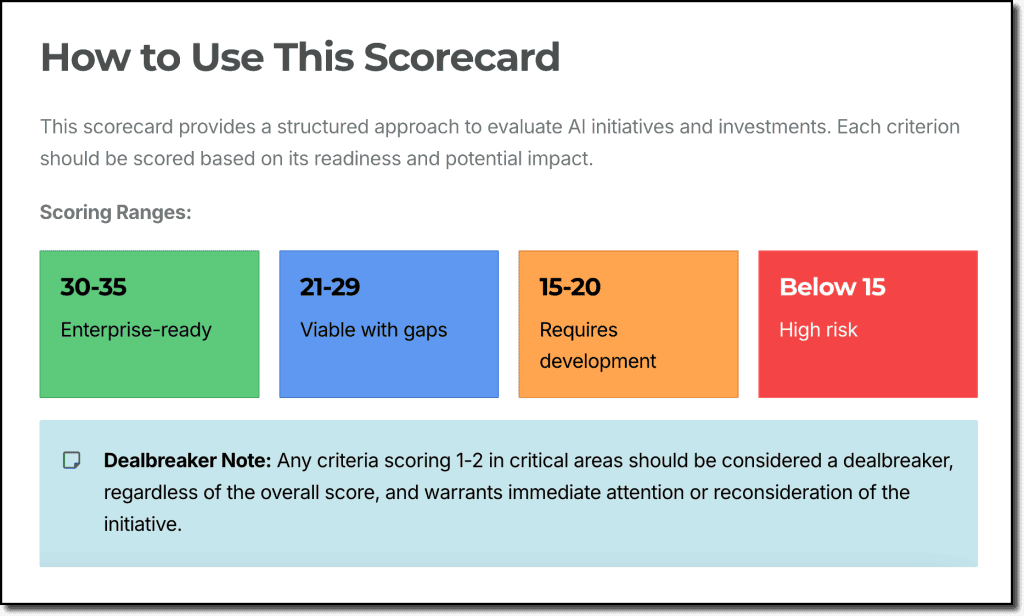

To help leaders evaluate AI tools quickly and objectively, we’ve created a simple scoring system for each of the seven non-negotiables.

Each criterion is rated 1–5:

- 5 — Enterprise-Grade = Full capability out-of-the-box with no configuration required

- 4 — Strong Capability = Minor limitations or configuration needed; production-ready with setup

- 3 — Partial Capability = Present but requires workarounds or manual processes

- 2 — Minimal Capability = Requires significant custom development or brittle integrations

- 1 — Absent/Locked = Nonexistent, roadmap-only, or fundamentally unavailable

Example scoring for LLM Agnosticism:

- 5 — Full Agnosticism = Runs multiple LLM providers natively; easy routing and switching; supports future models without rebuilds or re-engineering.

- 4 — Strong Flexibility = Supports several providers; switching requires minor configuration; limited provider-specific constraints.

- 3 — Partial Flexibility = Supports 2–3 models but with restrictions; switching requires developer work or context loss.

- 2 — Minimal Flexibility = Primarily locked into one provider; integrations available only via custom code or brittle plugins.

- 1 — No Flexibility = Single-model lock-in with no ability to swap or add alternatives.

Use this scorecard in your next vendor call or internal strategy review to quickly identify gaps and strengths.
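
For teams that want to tally results during a vendor call, the 1–5 ratings can be summarized in a few lines of Python. This is a sketch under stated assumptions: the unweighted average and the gap threshold of 2 are illustrative choices of ours, not part of the scorecard itself, and the `summarize` function and sample scores are hypothetical.

```python
from typing import Dict, List, Tuple

CRITERIA = [
    "LLM & Tool Agnosticism",
    "Persistent Knowledge",
    "Context Control & Recovery",
    "Integration with Existing Data",
    "Cost Visibility & Guardrails",
    "Human-in-the-Loop Quality",
    "Security, Governance & Portability",
]


def summarize(scores: Dict[str, int], gap_threshold: int = 2) -> Tuple[float, List[str]]:
    """Return (average score, criteria rated at or below gap_threshold)."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"unscored criteria: {missing}")
    for criterion in CRITERIA:
        if not 1 <= scores[criterion] <= 5:
            raise ValueError(f"{criterion}: score must be between 1 and 5")
    average = sum(scores[c] for c in CRITERIA) / len(CRITERIA)
    gaps = [c for c in CRITERIA if scores[c] <= gap_threshold]
    return round(average, 2), gaps


# Hypothetical vendor: solid overall, weak on cost controls.
vendor_scores = {criterion: 4 for criterion in CRITERIA}
vendor_scores["Cost Visibility & Guardrails"] = 2

average, gaps = summarize(vendor_scores)
print(average, gaps)
```

Reporting the gaps separately from the average matters: one low score on a non-negotiable can hide behind a respectable mean.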


The Bottom Line: Don’t Just Ship AI; Ship AI That Scales

Boards want rapid ROI.

Executives want clear governance.

Teams want proven reliability.

CFOs want cost predictability.

These seven non-negotiables give you the criteria—and the confidence—to deliver all four.

In future articles, we’ll explore how a new generation of AI platforms is operationalizing these principles for real teams in real workflows. It’s time for AI to evolve from disconnected chats into durable business intelligence.

If you’re making AI decisions this quarter, start here. And if you need help evaluating solutions or building a deployment roadmap, our team is here to help.

Article Sources

- Why Companies Need LLM-Agnostic AI (2025), Paleotech AI

- While Others Waste Billions… Use Existing Data You Already Own (2025), Paleotech AI

- Thriving Amid The AI Cost Crunch: Strategies for Success (2025), Paleotech AI

- AI Workslop Destroys $9M in Productivity (2025), Paleotech AI

- Programmers bore the brunt of Microsoft’s layoffs in its home state as AI writes up to 30% of its code (2025), TechCrunch