Why Companies Need LLM-Agnostic AI

We frequently talk with CTOs who’ve just been handed the same marching orders: “Do AI.” Boards and CEOs are reading headlines, they’re watching competitors experiment, and they want in (even if they were initially reluctant). The pressure is increasing.

But here’s the uncomfortable truth: according to a recent MIT report, 95% of AI pilots fail to deliver measurable ROI. Not because leaders aren’t investing. Not because models aren’t capable enough. The failures occur in part because organizations become locked into static systems that can’t adapt as AI evolves. Don’t bet on one model. This is exactly why companies need LLM-agnostic AI.

One of the biggest lessons (but by no means the only lesson) we’ve learned here at Paleotech AI from working with our clients is this: an excellent way to avoid becoming part of that 95% is to design your AI architecture to be LLM-agnostic from the start.

That means you don’t bet everything on one model or one vendor. Instead, you create a flexible foundation that allows you to swap models in and out, run multiple models in parallel, and route tasks intelligently. Done right, this approach buys you resilience, adaptability, and better ROI.

So why does this matter?

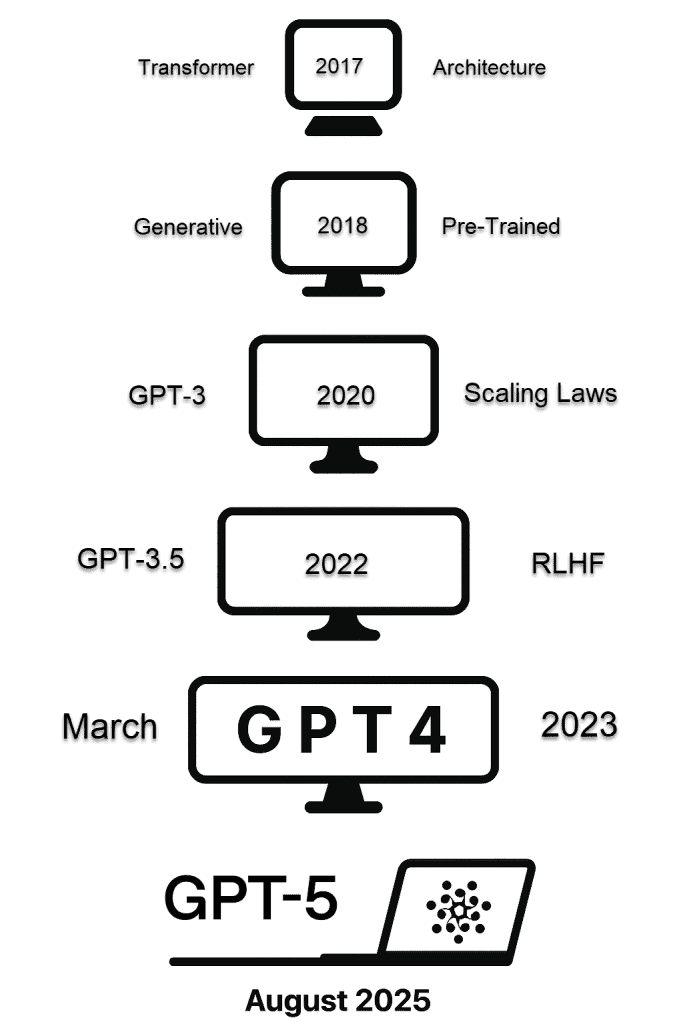

Models Change Faster Than You Can Build

The Generative AI landscape is moving at warp speed. A model that dominates today might be eclipsed in some key respect within a few months. We’re in the midst of one of the most explosive technology innovation cycles ever. The LLMs we were using for coding and building AI solutions eight months ago look primitive compared with the models available today.

If you’ve locked your systems to one LLM provider, you’ll be stuck with their roadmap, even if their model falls behind. But if you’re LLM-agnostic, you’re far better prepared for whatever comes next. You can switch in new leaders as they emerge, without expensive rebuilds or long delays. Think of it as hedging your bets in a market where the only constant is change.

In our work, we’ve seen this play out firsthand. For a government-sponsored food research system, we initially chose an OpenAI model because it excelled at synthesizing complex scientific literature and generating insightful research questions.

For months, it was delivering exactly what they needed—pulling connections across hundreds of papers, identifying research gaps, and proposing novel hypotheses.

Then, both Anthropic and Google released updated models with dramatically improved reasoning capabilities and an enhanced ability to generate coherent, engaging narratives. After incorporating those models into the client’s workflow, the quality of research insights jumped noticeably—more nuanced analysis, better identification of methodological flaws, and research questions that were both more specific and more actionable.

Because we’d built their system to be model-agnostic, switching took a few hours instead of rebuilding the entire research pipeline. Now we can continuously optimize—switching to the best model for analyzing industry and academic reports, another for web searches, and another for synthesizing perspectives into answers for research questions—easily adapting as the technology evolves.
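In code, that kind of swap can come down to a configuration change. The sketch below is a minimal illustration, not the client’s actual pipeline: `ModelRegistry`, the task names, and the stub clients are all hypothetical, and a real adapter would wrap each vendor’s SDK behind the same prompt-in, text-out signature.

```python
from typing import Callable, Dict

# A model client is anything that maps a prompt to a text response.
# Real adapters would wrap a vendor SDK; these stubs are placeholders.
ModelClient = Callable[[str], str]


def make_stub(name: str) -> ModelClient:
    """Build a fake client that tags its output with the model name."""
    def client(prompt: str) -> str:
        return f"[{name}] {prompt}"
    return client


class ModelRegistry:
    """Maps task names to model clients so a swap is a config change."""

    def __init__(self) -> None:
        self._clients: Dict[str, ModelClient] = {}
        self._task_map: Dict[str, str] = {}

    def register(self, model_name: str, client: ModelClient) -> None:
        self._clients[model_name] = client

    def assign(self, task: str, model_name: str) -> None:
        if model_name not in self._clients:
            raise KeyError(f"unknown model: {model_name}")
        self._task_map[task] = model_name

    def run(self, task: str, prompt: str) -> str:
        return self._clients[self._task_map[task]](prompt)


registry = ModelRegistry()
registry.register("model-a", make_stub("model-a"))
registry.register("model-b", make_stub("model-b"))
registry.assign("report_analysis", "model-a")
registry.assign("synthesis", "model-b")

before = registry.run("report_analysis", "Summarize the findings")
# Swapping models touches configuration only; calling code is unchanged.
registry.assign("report_analysis", "model-b")
after = registry.run("report_analysis", "Summarize the findings")
```

Because callers only ever name a task, trying a newly released model means registering one more client and reassigning the task. In practice, prompts often need some retuning per model, so a swap is rarely free, just much cheaper than a rebuild.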

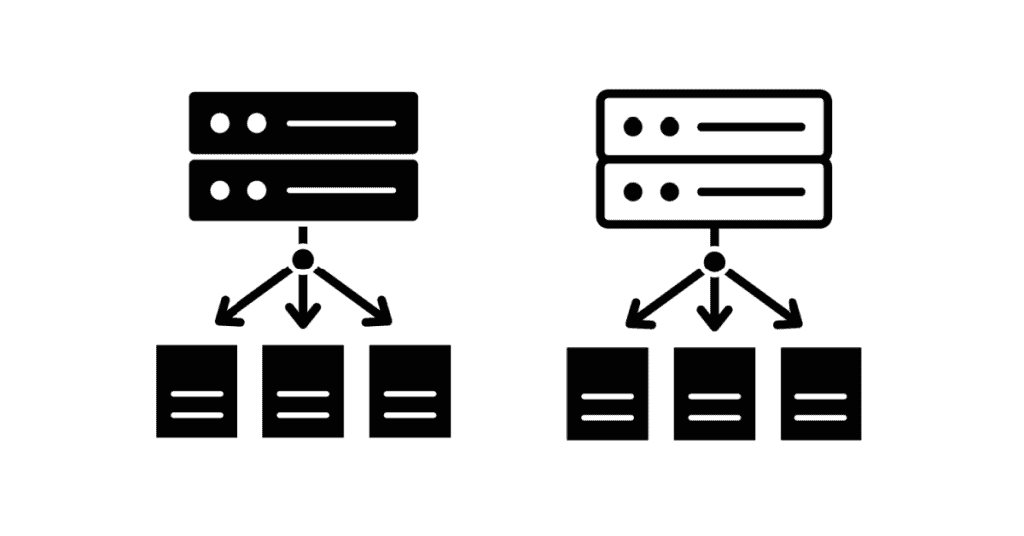

Multi-Model Is Stronger Than Single-Model

Think of LLMs like specialized experts. One might be great at writing code, another at generating marketing copy, another at multilingual translation. So why settle and ask one “generalist” to do everything? Even if the market stopped evolving tomorrow, no single LLM would be the best at everything.

An LLM-agnostic system lets you synthesize strengths across multiple models. For example, in retrieval-augmented generation (RAG) setups, you can pull information through one model, validate it through another, and have a third handle content summarization and synthesis.
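A rough sketch of that three-model flow is shown here. Everything in it is an assumption made for illustration: the `run_pipeline` function, the prompt formats, the `SUPPORTED` verdict convention, and the stub models, which stand in for real LLM calls so the example runs on its own. The sample documents are placeholder text.

```python
from typing import Callable, List

ModelClient = Callable[[str], str]


def run_pipeline(
    question: str,
    documents: List[str],
    extractor: ModelClient,
    validator: ModelClient,
    synthesizer: ModelClient,
) -> str:
    """Extract with one model, check with a second, synthesize with a third."""
    kept: List[str] = []
    for doc in documents:
        claim = extractor(f"Question: {question}\nSource: {doc}")
        verdict = validator(f"Source: {doc}\nClaim: {claim}")
        # Keep only claims the validator model marks as supported.
        if verdict.strip().upper().startswith("SUPPORTED"):
            kept.append(claim)
    if not kept:
        return "No supported claims found."
    return synthesizer("\n".join(kept))


# Stub models so the sketch is runnable without any vendor SDK.
def stub_extractor(prompt: str) -> str:
    return prompt.split("Source: ", 1)[1]  # simply echoes the source text


def stub_validator(prompt: str) -> str:
    return "UNSUPPORTED" if "unverified" in prompt else "SUPPORTED"


def stub_synthesizer(prompt: str) -> str:
    return f"Summary of {len(prompt.splitlines())} claim(s)"


docs = [
    "Study A reports a positive effect.",
    "An unverified post claims the opposite.",
]
result = run_pipeline(
    "What does the evidence say?", docs,
    stub_extractor, stub_validator, stub_synthesizer,
)
```

Each stage takes its model as a parameter, so any one of the three can be replaced without touching the other two.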

This isn’t just theoretical. We’ve helped companies (and our own organization) get higher-quality, more reliable results by orchestrating multiple LLMs. To the user, it feels seamless. Behind the scenes, it’s a symphony of models working together. As a bonus, this approach also builds user trust. When your people see consistently high-quality outputs, they use the system more, which is half the battle in AI adoption.

LLM Cost Optimization

Not all tasks deserve the same horsepower. Why pay premium prices when a basic model will do?

LLM pricing varies dramatically across providers. An LLM-agnostic setup means you can route lightweight tasks (like drafting emails or summarizing notes) to cheaper (or even free, open-source) models, while reserving premium models for high-stakes reasoning or compliance-heavy work.

This simple routing strategy can cut AI costs significantly while maintaining quality where it matters most.
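As a hedged illustration of such a routing rule, the sketch below sends a short list of lightweight tasks to a cheaper tier and everything else to a premium tier, then compares the bill with and without routing. The tier names, task labels, token counts, and prices are all made up for the example and are not real vendor pricing.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class ModelTier:
    name: str
    usd_per_million_tokens: float  # made-up price, for illustration only


TIERS: Dict[str, ModelTier] = {
    "basic": ModelTier("small-model", 0.20),
    "premium": ModelTier("large-model", 10.00),
}

# Hypothetical task labels; anything not listed falls back to premium.
LIGHTWEIGHT_TASKS = {"draft_email", "summarize_notes"}


def pick_tier(task: str) -> ModelTier:
    """Route known lightweight tasks to the basic tier, the rest to premium."""
    return TIERS["basic"] if task in LIGHTWEIGHT_TASKS else TIERS["premium"]


def workload_cost(workload: List[Tuple[str, int]], route: bool = True) -> float:
    """Total USD cost for (task, token_count) pairs, with or without routing."""
    total = 0.0
    for task, tokens in workload:
        tier = pick_tier(task) if route else TIERS["premium"]
        total += tier.usd_per_million_tokens * tokens / 1_000_000
    return total


workload = [
    ("draft_email", 2_000),
    ("summarize_notes", 3_000),
    ("compliance_review", 5_000),
]
routed_cost = workload_cost(workload)
premium_only_cost = workload_cost(workload, route=False)
```

Defaulting unknown tasks to the premium tier is a deliberate choice in this sketch: a misrouted task costs a little extra money rather than quality on high-stakes work.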

LLM Uptime and Failover Matter More Than You Think

Here’s something the fancy vendor websites don’t tell you: no LLM has 100% uptime. Every provider has slowdowns and outages, not to mention usage limits.

If your business is locked to one model, you’re out of luck when it goes down or slows to a crawl.

For example, ChatGPT experienced several notable outages between March and August 2025. The most severe and widely reported came on June 10th and 11th, when a global outage affected ChatGPT (free and paid), the Sora video generator, and the OpenAI API. The incident was one of the most disruptive in ChatGPT’s history, lasting over 10 hours for many users and disrupting workflows for both individuals and businesses.

And this situation isn’t unique to ChatGPT: every major provider has had downtime at some point.

Similarly, if you hit the provider-imposed rate limits on your organization’s account, your AI-powered features simply stop working. Unlike scheduled maintenance, rate limiting can kick in suddenly during peak usage periods.

It doesn’t matter if an outage is widespread or just limited to your own use of a model: if you’ve designed your system to be LLM-agnostic, you can build automatic failover that shifts traffic to another provider with little or no interruption. That’s the kind of resilience every company needs.
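A minimal failover sketch, under the assumption that every provider is wrapped as a plain prompt-in, text-out callable, might look like this. The function name, the exception class, and the two stub providers are hypothetical; a production version would also want timeouts, retries with backoff, and logging.

```python
from typing import Callable, List, Sequence, Tuple

ModelClient = Callable[[str], str]


class AllProvidersFailed(Exception):
    """Raised when every provider in the chain has errored."""


def complete_with_failover(
    prompt: str, providers: Sequence[Tuple[str, ModelClient]]
) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, response) on first success."""
    errors: List[str] = []
    for name, client in providers:
        try:
            return name, client(prompt)
        except Exception as exc:  # outage, timeout, rate limit, and so on
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stub providers: the primary simulates a rate-limit error.
def flaky_primary(prompt: str) -> str:
    raise RuntimeError("rate limit exceeded")


def healthy_backup(prompt: str) -> str:
    return f"backup handled: {prompt}"


used, reply = complete_with_failover(
    "hello", [("primary", flaky_primary), ("backup", healthy_backup)]
)
```

The caller gets back the name of the provider that answered, which makes it easy to track how often the fallback path is actually used.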

Vendor Risk Management

Vendor lock-in is risky. AI providers can (and do) change their terms, pricing, and feature sets overnight.

A perfect example is when OpenAI suddenly retired GPT-4o and GPT-4.1, forcing businesses that had heavily invested in those models to scramble and retool for GPT-5. For organizations that had tuned workflows and prompts specifically to GPT-4.1, the switch created both operational disruption and unexpected costs.

If you’re tied to one LLM, you’re at your vendor’s mercy. But if you’re LLM-agnostic, you have the freedom to reroute workloads or swap in alternatives quickly, without losing months of work. In other words: you stay in control, not your LLM vendor.

Staying on the AI Innovation Frontier

Staying LLM-agnostic keeps you nimble.

The AI market is exploding with new entrants — from open-source models to industry-specific specialists. If you’ve built your system to be flexible, you can try out new models quickly and integrate the new winners without tearing everything down.

This is how companies will stay competitive with Generative AI: by experimenting at low cost, adopting what works, and discarding what doesn’t. The organizations that can’t adapt will be left behind.

How You Can Get Started

You don’t need a massive team or budget to start thinking LLM-agnostic. A few practical steps:

- Ask the right questions. When you’re evaluating vendors or partners, go beyond the demo. Ask: Can we swap out models easily if something better comes along? How can you help us leverage the best model for each task, not just today but in the future? If a vendor can’t answer those questions directly, that’s a red flag. You want flexibility built into the architecture, not hidden lock-in that will cost you later.

- Optimize for cost and performance. At first glance, AI pricing looks cheap — a dollar for a million tokens doesn’t sound like much. But as implementations get more complex and require larger context windows, and your user base scales, costs can climb quickly. The key is to design processes that feed in only the minimum information needed for each task. When designing systems, break big workflows into smaller sub-tasks, and be selective about which ones really need premium LLM horsepower. Then, match each task with the model that delivers the right balance of performance and cost. This way, you can scale AI usage beyond pilots without getting blindsided by sticker shock.

- Measure outcomes, not benchmarks. Model benchmarks (leaderboards, accuracy scores, etc.) are useful for researchers — but they don’t tell you if AI is helping your business. Don’t fall for a sales pitch that leans too heavily on test scores. Instead, define what success means in your context: faster contract turnaround, fewer customer service escalations, or reduced BPO spend. Then measure against those. That’s how you know if your investment is actually moving the needle.
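To make the cost point in the second step concrete, here is a back-of-the-envelope calculation at the “dollar for a million tokens” price mentioned above. The user counts, request volumes, and token counts are invented purely to show how the arithmetic scales; they are not measurements from any real deployment.

```python
def monthly_cost_usd(
    users: int,
    requests_per_user_per_day: int,
    tokens_per_request: int,
    usd_per_million_tokens: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend from usage volume and a flat per-token price."""
    total_tokens = users * requests_per_user_per_day * tokens_per_request * days
    return total_tokens * usd_per_million_tokens / 1_000_000


# Hypothetical pilot: 20 users, small prompts.
pilot = monthly_cost_usd(20, 10, 2_000, 1.0)        # 12 million tokens

# Hypothetical rollout: 5,000 users, large context per request.
scaled = monthly_cost_usd(5_000, 10, 20_000, 1.0)   # 30 billion tokens

# Same rollout after trimming each request to the minimum needed context.
trimmed = monthly_cost_usd(5_000, 10, 5_000, 1.0)   # 7.5 billion tokens
```

With these made-up inputs, the pilot costs $12 a month, the full rollout costs $30,000, and trimming context brings it down to $7,500. The price per token never changed; only the volume did.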

How Paleotech Can Help

At Paleotech AI, we’ve seen how fragile single-model deployments can be, and we strive for better outcomes for all our clients. That’s why every AI architecture we design and implement is LLM-agnostic by default.

Whether it’s an investment firm extracting details from dense legal documents or a retailer enabling non-technical staff to query databases in plain language for sales insight, we’ve helped companies build systems that adapt, not systems that get stuck.

Our goal isn’t to push one vendor or one model. It’s to help companies cross the AI ROI divide by designing for resilience, flexibility, and true business value. We’d be happy to share with you ideas on how AI can create lasting results for your business, too.

Closing Thoughts

The MIT report put it bluntly: the problem isn’t adoption, it’s learning. Systems that don’t evolve get stuck in pilots and never deliver long-term business value.

LLM-agnostic design is one of the most practical ways to help solve that problem from the start. It gives you the flexibility to adapt as models, vendors, and business needs change. If you’re a CTO under pressure to “do AI,” don’t just pick a model and hope for the best. Build an architecture that keeps your options open, because the only guarantee in the AI world right now is that the future will look very different from today.

Interested in seeing how the team at Paleotech AI might be able to help your business? Schedule a free consultation call.

Article Sources

- State of AI in Business 2025 (2025), MIT

- Powerful AI for Business in 2025 (Jan 2025), Paleotech AI